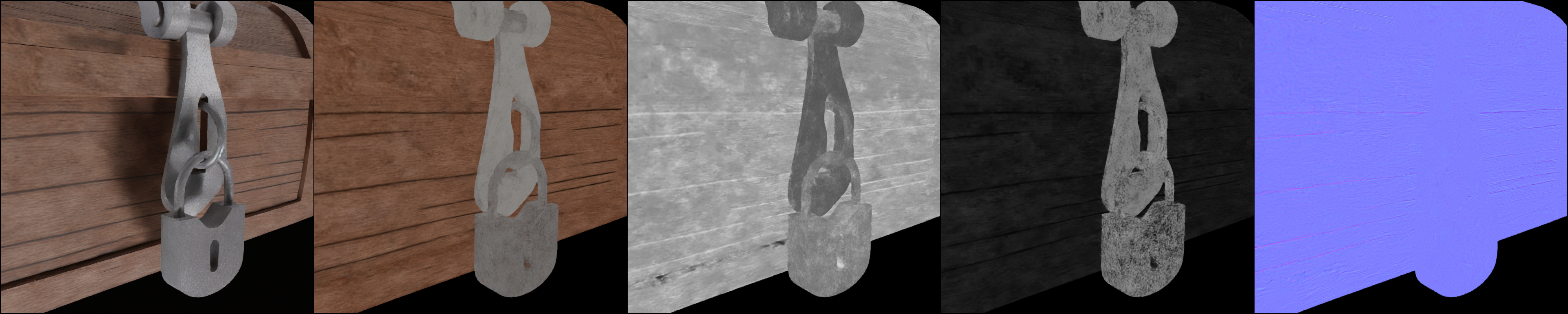

Current 3D content generation builds on generative image models that output RGB only.

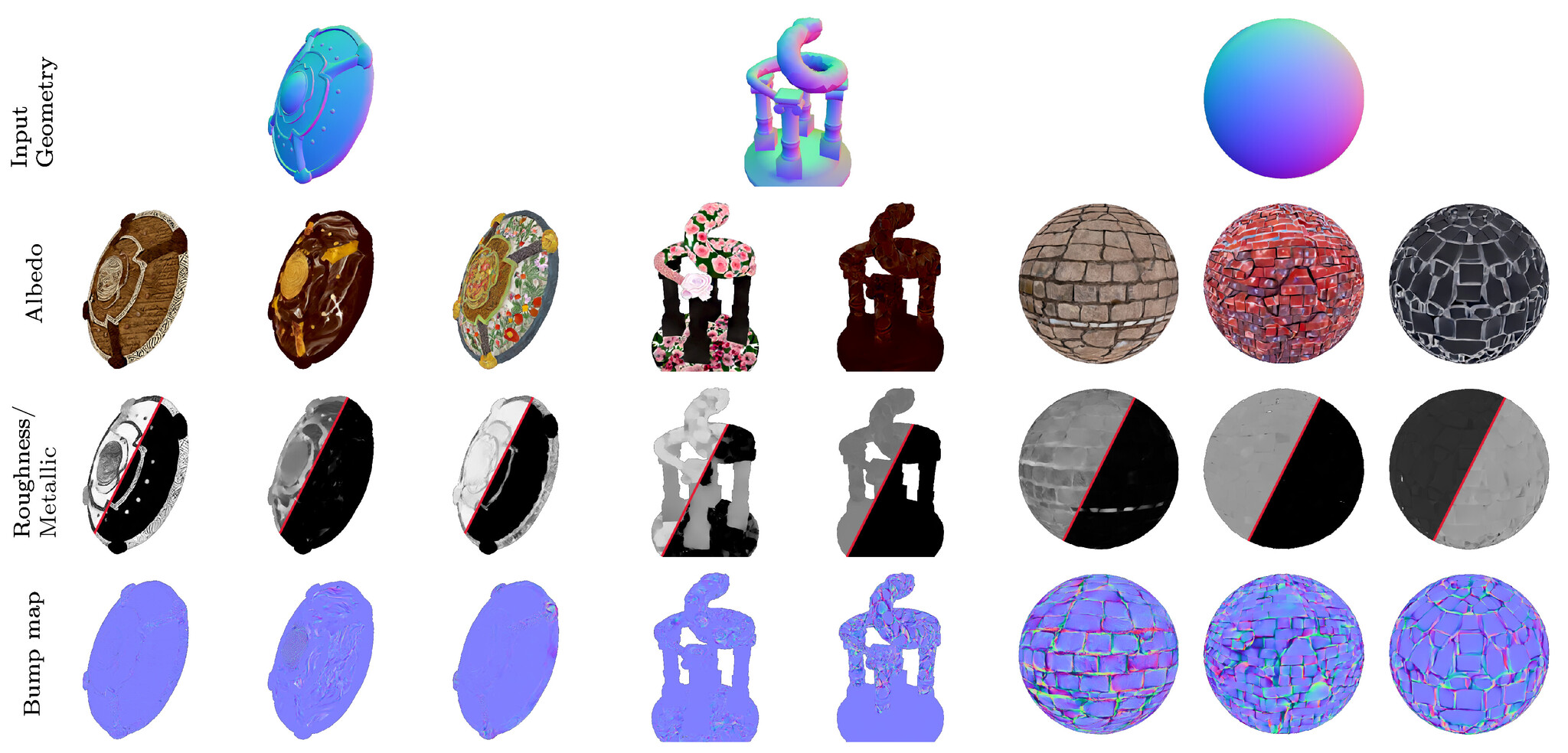

Modern graphics pipelines, however, require physically-based rendering (PBR) material properties.

We propose to model the PBR image distribution directly, sidestepping photometric inaccuracies in RGB generation and the inherent ambiguity in extracting PBR from RGB.

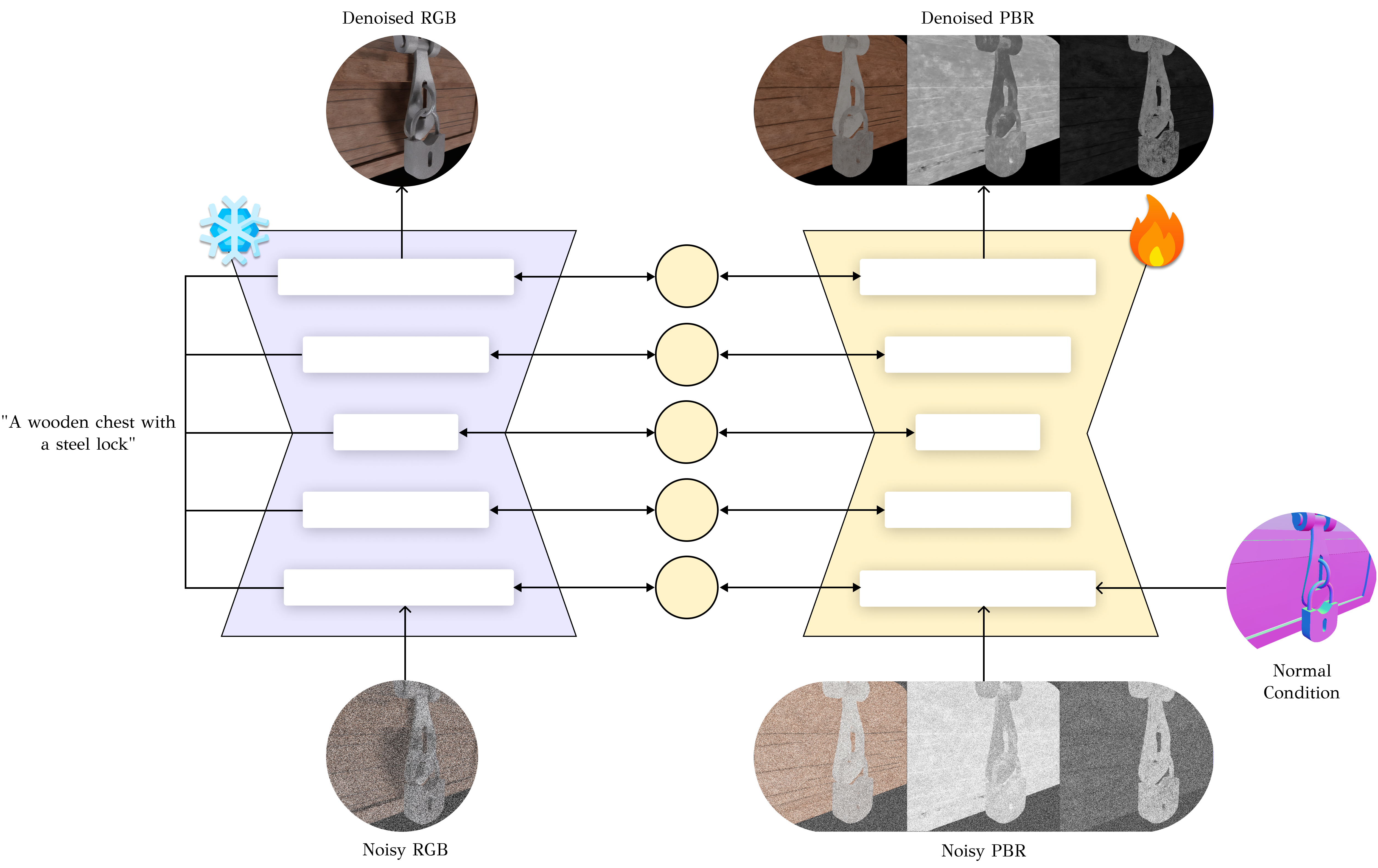

Existing approaches leveraging cross-modal finetuning are limited by the lack of data on one hand and the

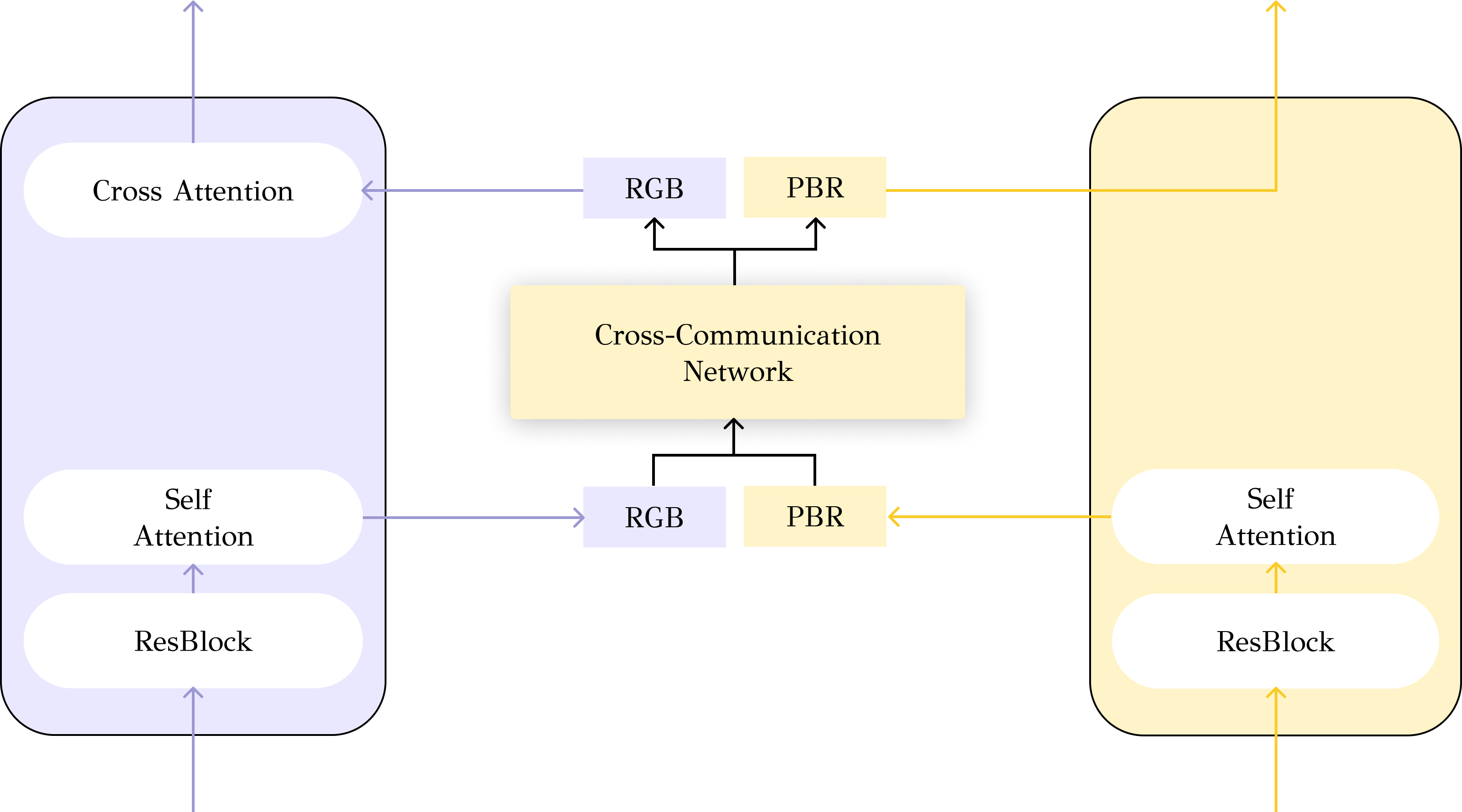

high dimensionality of the output modalities on the other: we overcome both challenges by keeping a frozen

RGB model and tightly linking a newly trained PBR model using a novel cross-network communication

paradigm.
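The frozen/trainable split described above can be illustrated with a minimal PyTorch sketch. It is not the authors' implementation. The class name `LinkedBlocks`, the use of plain linear layers as stand-ins for diffusion blocks, the bidirectional residual link, and its zero initialization are all assumptions made for illustration. The sketch only shows that a frozen base block stays untouched while the PBR block and the linking layer are trained.

```python
import torch
from torch import nn


class LinkedBlocks(nn.Module):
    """Hypothetical pair: one frozen RGB block, one trainable PBR block, one link."""

    def __init__(self, dim: int):
        super().__init__()
        self.rgb_block = nn.Linear(dim, dim)      # stand-in for a pretrained RGB block
        self.pbr_block = nn.Linear(dim, dim)      # newly trained PBR block
        self.comm = nn.Linear(2 * dim, 2 * dim)   # assumed cross-network link
        # Assumption: zero-init the link so both streams start out unmodified.
        nn.init.zeros_(self.comm.weight)
        nn.init.zeros_(self.comm.bias)
        # Freeze the base RGB block: it receives no gradients and no updates.
        for p in self.rgb_block.parameters():
            p.requires_grad_(False)

    def forward(self, h_rgb, h_pbr):
        h_rgb = self.rgb_block(h_rgb)
        h_pbr = self.pbr_block(h_pbr)
        # The link sees both hidden states and returns a residual for each stream.
        delta = self.comm(torch.cat([h_rgb, h_pbr], dim=-1))
        d_rgb, d_pbr = delta.chunk(2, dim=-1)
        return h_rgb + d_rgb, h_pbr + d_pbr


torch.manual_seed(0)
model = LinkedBlocks(dim=8)
rgb_before = [p.clone() for p in model.rgb_block.parameters()]

# Only the PBR block and the link are handed to the optimizer.
trainable = [p for p in model.parameters() if p.requires_grad]
optimizer = torch.optim.SGD(trainable, lr=0.1)

h_rgb, h_pbr = torch.randn(4, 8), torch.randn(4, 8)
target = torch.randn(4, 8)  # dummy PBR target, for illustration only
for _ in range(3):
    _, out_pbr = model(h_rgb, h_pbr)
    loss = ((out_pbr - target) ** 2).mean()
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

# The frozen RGB weights are identical before and after training.
rgb_unchanged = all(
    torch.equal(a, b) for a, b in zip(rgb_before, model.rgb_block.parameters())
)
```

Because the base weights never change in this setup, anything pretrained against the base block keeps seeing the same weights, which is the property the frozen design relies on.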

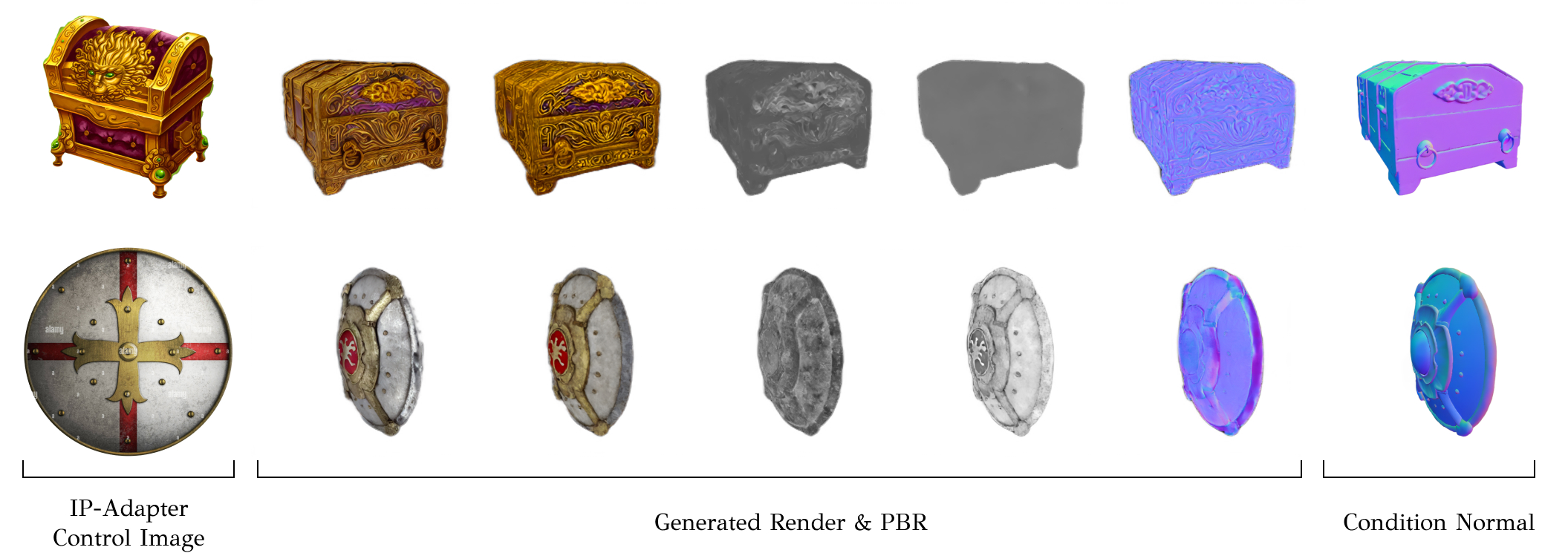

As the base RGB model is fully frozen, the proposed method does not risk catastrophic forgetting during finetuning and remains compatible with techniques such as IP-Adapter pretrained for the base RGB model.

We validate our design choices and the method's robustness to data sparsity, and compare against existing paradigms in an extensive experimental section.

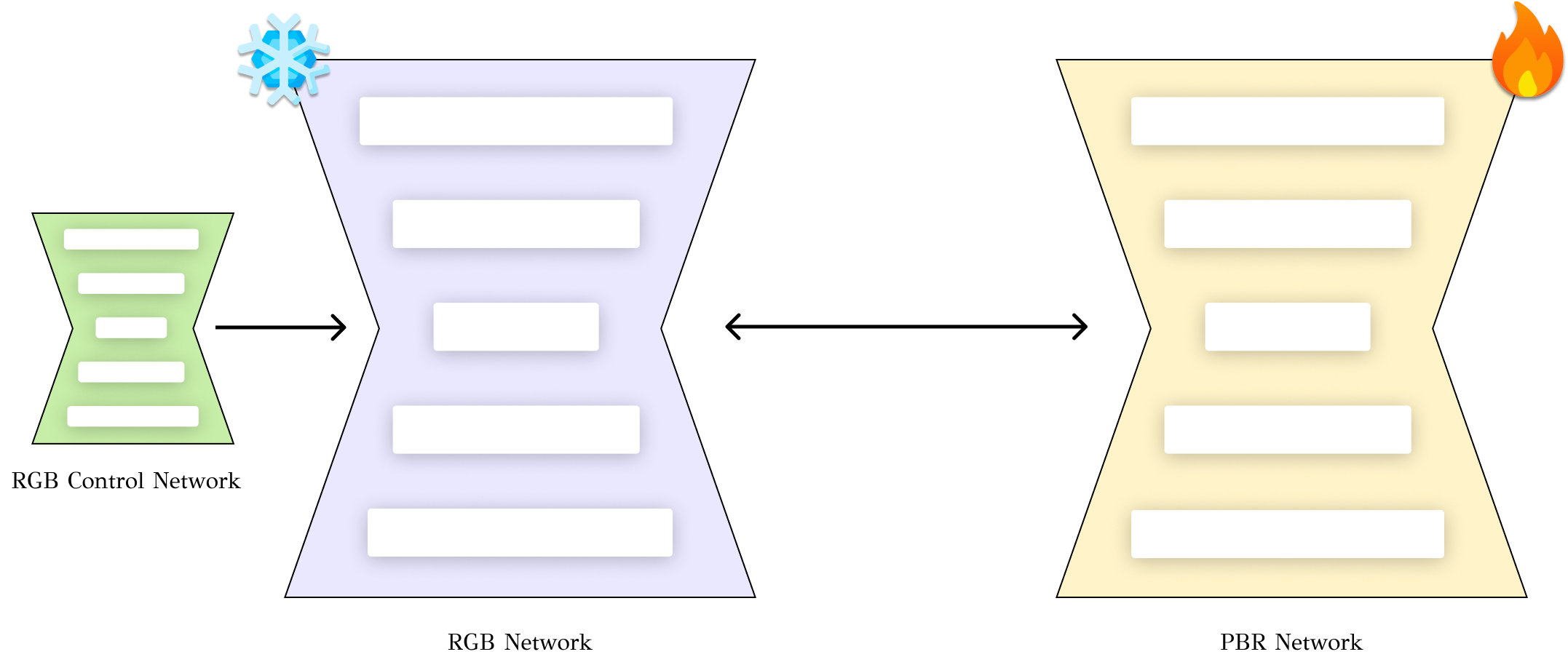

Since our method keeps the RGB model frozen, it is compatible with any control method in a plug-and-play fashion.

We have experimented with IP-Adapter, but the method can be used in the same way with any other control method (T2I-Adapter, ControlNet, etc.).

This feature vastly expands the usability of our method in practical scenarios.

We provide an interactive demonstration of our method using Gradio. You can try out our method with your own meshes and prompts and see the results in real time.

Example meshes are provided below.

Demo currently unavailable.

@article{,
  author = {Vainer, Shimon and Boss, Mark and Parger, Mathias and Kutsy, Konstantin and De Nigris, Dante and Rowles, Ciara and Perony, Nicolas and Donn\'e, Simon},
  title = {Collaborative Control for Geometry-Conditioned PBR Image Generation},
  journal = {arXiv preprint arXiv:2402.05919},
  year = {2024},
}